I Gave My 580-Note Obsidian Vault a Health Checkup

It had 102 structural problems. A Python script found and fixed them all in one run.

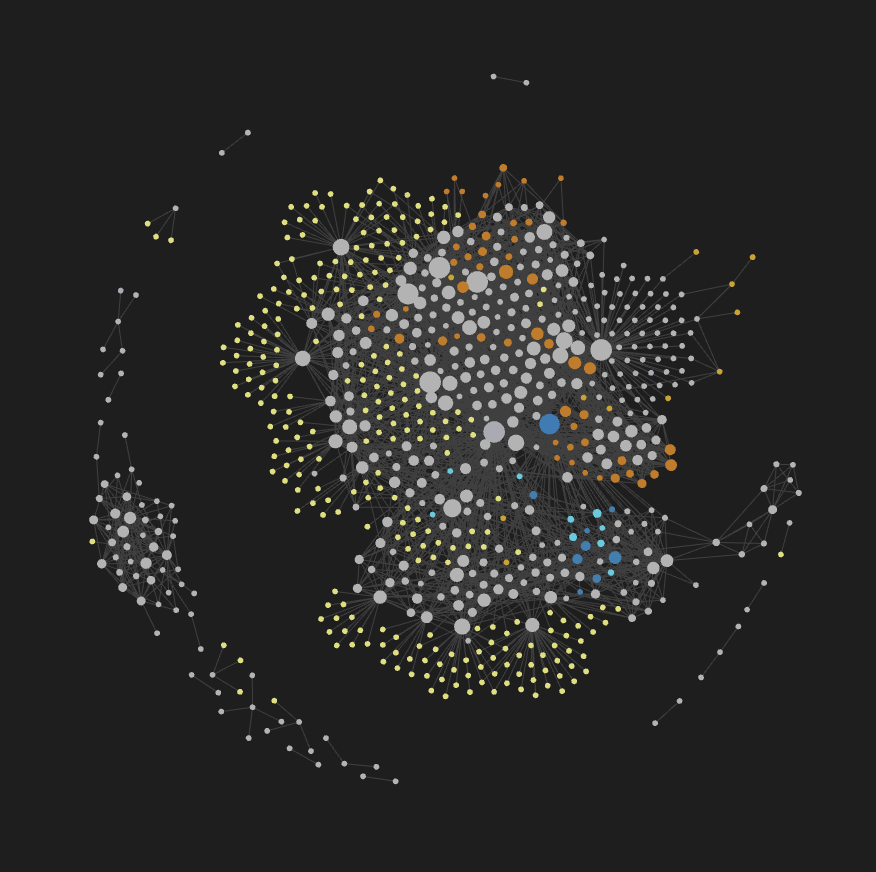

My Obsidian vault hit 580 notes. I’d been linking concepts for months, feeling pretty good about my “second brain.” Then I started noticing problems: stuff I’d definitely written about, but couldn’t find by following links. Notes that led nowhere. Topics that should be connected but weren’t.

Gut feeling wasn’t enough. I wanted numbers. So I wrote a script to give my knowledge base a proper checkup.

It found 102 problems. Then it fixed all of them.

What Does a “Healthy” Knowledge Base Look Like?

Think of your vault like a city’s road network. A good city has:

- Neighborhoods — related streets cluster together (your notes on React hooks link to state management, useEffect, component lifecycle)

- Highways — you can get from any neighborhood to any other in a few turns, not twenty

- Multiple routes — if one road is closed, you can still get where you need to go

Network scientists call this a small-world network. It’s the same structure behind social networks (“six degrees of separation”), the internet, and your brain’s neural pathways. Tight local clusters, short global paths, a few key hubs connecting everything.

There’s a single number that captures this — the small-world coefficient σ:

σ = (C / Crandom) ÷ (L / Lrandom)

C is the clustering coefficient — how tightly your neighborhoods stick together. L is the average path length — how many hops to get between any two notes. You divide each by what a random graph of the same size would produce. σ > 1 = small-world. Higher is better.

My vault scored σ = 9.67: clustering 12× higher than random, path lengths only 1.2× longer. The big picture was fine. But the details were a mess.

What the Checkup Found

The script analyzed two things: what my notes say (content) and how they’re connected (structure). Here’s what came back.

21 pairs of notes that should know about each other, but don’t

The script builds a TF-IDF vector for every note — a fingerprint of which words matter most in each one — then computes the cosine similarity between every pair. When two notes score above 0.5 (meaning they share significant vocabulary) but have no link between them, that’s a gap in your knowledge web. I had 21 of these — notes about the same concept written weeks apart, each unaware the other exists.

20 dead ends

Notes you can arrive at, but can’t leave. You follow a link, land on the page, and… there’s nowhere to go next. Twenty of my notes were like cul-de-sacs with no exit.

8 orphan notes

The opposite problem: notes that link out to other things, but nothing links to them. The only way to find them is to search by name. If you don’t remember the exact title, they’re invisible.

15 single points of failure

This was the scariest finding. In graph theory they’re called articulation points — nodes whose removal splits the graph into disconnected pieces. In plain English: fifteen notes where, if you deleted just that one note, entire topic areas would become unreachable from each other. Imagine a bridge that’s the only connection between two halves of a city — lose it, and you can’t get across.

My worst offender was a note called system-architecture. It had the highest betweenness centrality in the entire graph — meaning more shortest paths ran through it than any other node. Every route between frontend and infrastructure topics depended on it. One note, holding the whole thing together.

5 topic silos with almost no cross-links

Using community detection (modularity optimization), the algorithm found five natural topic clusters in my vault. The problem: they were barely connected to each other. Each cluster was a self-contained island. You could navigate freely within “deployment,” but getting from “deployment” to “data modeling” meant hopping through the same overloaded hub notes every time.

23 broken links

Notes pointing to raw file paths ([[report.pdf]]) instead of actual concepts ([[quarterly-review]]). These create phantom nodes in the graph — destinations that don’t really exist.

What the Script Fixed

Diagnosis without action is useless. The script runs six repair passes, from most critical to least:

- Bypass routes around every single-point-of-failure. For each dangerous hub note, connect two of its neighbors directly. Now if the hub disappears, there’s still a path. 51 bypass links added.

- Fix the 23 broken links. Map each bad link to its correct target. Done.

- Give every orphan note an incoming link. Find the most relevant connected note and add a backlink. 8 orphans rescued.

- Connect the 21 “should-be-linked” pairs. Add bidirectional links between notes that discuss the same topic but didn’t reference each other. 13 pairs connected (the rest fell below the confidence threshold).

- Cross-reference near-duplicates. Instead of merging similar notes (which loses nuance), add “see also” links so you know both exist. 1 cross-reference.

- Build bridges between topic silos. Pick the most important note in each cluster and connect it to the most important note in the next cluster. 6 bridges built.

Total: 102 fixes, one run, zero manual editing.

Before and After

| Before | After | |

|---|---|---|

| Total links | 2,720 | 2,808 |

| How tightly related notes cluster | 0.193 | 0.219 (+14%) |

| Avg hops between any two notes | 3.51 | 3.36 (-4%) |

| Overall health score | 9.67 | 10.54 (+9%) |

| Missing links found | 21 | 7 |

| Broken links | 23 | 0 |

| Orphan notes | 8 | 0 |

Tighter clusters, faster navigation, backup routes everywhere. The vault went from “works if you already know where everything is” to “you can actually discover things by browsing.”

What I Took Away

- Every knowledge base rots if you don’t maintain it. Bottlenecks, dead ends, and orphans are the natural byproduct of organic growth. You won’t notice until it’s already bad.

- The scariest problems are invisible. I had no idea 15 of my notes were single points of failure. It felt fine. The numbers told a different story.

- Machines catch what humans miss. Two notes about the same topic, written in different words, weeks apart? I’d never have connected them manually. The algorithm did it in seconds.

- “Organizing my notes” can be engineering, not vibes. Measure, diagnose, fix, re-measure. Same loop as any other system.

我的知识库长到 580 条笔记后,我给它做了一次体检

查出 102 个结构问题,一个脚本全修好了。

两个月前写过一篇文章,提到我用 Obsidian 的双向链接给 AI 助理建了一套网状索引。

用了两个月,知识库膨胀到 580 条笔记。规模上去了,体验却在下降 — 明明写过的东西,顺着链接到不了;有些笔记写完之后再没被任何地方引用过;还有几条笔记,一旦出问题,一整片知识都会断联。

靠直觉整理了几次,越整越乱。需要量化。于是写了个脚本给知识库做体检。

结果查出 102 个问题。然后脚本把它们全修了。

什么叫「健康」的知识库?

把知识库想象成一个城市的交通网:

- 小区内部道路密 — 相关概念之间互相连接(React hooks 链到状态管理、useEffect、生命周期)

- 有高速公路 — 从任何一个小区到另一个小区,几步就能到,不用绕大圈

- 多条路线 — 一条路封了,还有别的路可以走

网络科学管这叫小世界网络。社交网络里的「六度分隔」、互联网的路由、大脑的神经回路,底层都是这个结构。

有一个数字专门衡量这件事 — 小世界系数 σ:

σ = (C / Crandom) ÷ (L / Lrandom)

C 是聚类系数 — 邻居之间互相连接的紧密程度。L 是平均路径长度 — 任意两条笔记之间要跳几步。把各自除以同等规模随机图的期望值,就得到 σ。σ > 1 = 小世界,越大越健康。

我的知识库得分 σ = 9.67:聚类是随机图的 12 倍,路径只长了 1.2 倍。宏观结构没问题。但细节全是坑。

体检查出了什么

脚本分析两个维度:笔记「写了什么」(内容)和笔记之间「怎么连的」(结构)。

21 对笔记讲同一件事,却互相不知道

脚本给每条笔记构建一个 TF-IDF 向量 — 相当于一份用词指纹 — 然后计算每对笔记之间的余弦相似度。两条笔记得分超过 0.5(说明用词高度重叠)但之间没有任何链接,就是知识网的断裂。我有 21 对这样的笔记,往往是隔了几周分别写的,写的时候完全忘了另一条的存在。

20 条死胡同

你顺着链接走进去,然后…没有出口了。20 条笔记指向别人,但自己一个出链都没有。到了就走不出来。

8 条孤儿笔记

反过来的问题:有出链,但没有任何地方链到它们。除非你记得标题、主动搜索,否则永远不会发现它们的存在。

15 个单点故障

这是最吓人的发现。图论里叫割点(articulation point)— 移除后整个图会断成两块的节点。说人话就是:15 条笔记,删掉其中任何一条,整片主题区域就会断联。想象一座桥是两个城区之间唯一的通道 — 桥塌了,两边就彻底不通。

最危险的是一条叫 system-architecture 的笔记,全图介数中心性(betweenness centrality)最高 — 意味着经过它的最短路径比任何其他节点都多。前端和基础设施之间的所有路径都经过它。一条笔记,扛着整个知识库的连通性。

5 个主题孤岛

通过社区检测(modularity optimization),算法把知识库自动分成了 5 个主题区。问题是:区与区之间几乎没有链接。你可以在「部署」这个主题里自由导航,但想从「部署」走到「数据建模」,每次都要经过那几个已经超载的枢纽笔记。

23 条坏链接

有些笔记链接到了文件路径([[report.pdf]])而不是实际的概念节点([[季度复盘]])。这些链接指向不存在的目标,在图谱里制造了一堆幽灵节点。

脚本修了什么

诊断不修等于白做。脚本按严重程度跑了六轮修复:

- 给每个单点故障建旁路。把危险枢纽的两个邻居直接连上,枢纽挂了也有备用路线。加了 51 条旁路。

- 修复 23 条坏链接。把错误路径映射到正确的概念节点。全部修完。

- 给 8 条孤儿笔记找到入口。找到和它最相关的笔记,加一条反向链接。孤儿清零。

- 连接「应该互相知道」的笔记对。内容高度相似但没有链接的,自动加双向链接。连了 13 对(剩下的低于置信阈值,没动)。

- 给近似重复的笔记加「另见」。不合并(怕丢细节),加一条交叉引用让你知道还有一条类似的。1 条。

- 在主题孤岛之间架桥。每两个主题区之间,选各自最重要的笔记,连一条链接。6 座桥。

合计 102 处修复,一次运行,零手动编辑。

修复前后对比

| 修复前 | 修复后 | |

|---|---|---|

| 链接数 | 2,720 | 2,808 |

| 相关笔记之间的紧密度 | 0.193 | 0.219 (+14%) |

| 任意两条笔记之间的平均跳数 | 3.51 | 3.36 (-4%) |

| 整体健康分 | 9.67 | 10.54 (+9%) |

| 遗漏链接 | 21 | 7 |

| 坏链接 | 23 | 0 |

| 孤儿笔记 | 8 | 0 |

关系更紧密,导航更快,关键节点全有备用路径。知识库从「你得记住东西在哪才能找到」变成了「顺着链接随便走就能发现新东西」。

几个收获

- 知识库不维护一定会退化。瓶颈、死胡同、孤儿笔记是自然生长的必然产物,不会自己消失。

- 最危险的问题是你看不见的。我完全不知道有 15 条笔记是单点故障,用起来「感觉还行」。数字说的是另一回事。

- 机器能发现人发现不了的关联。两条笔记讲同一件事,但用了不同的词,隔了几周写的 — 我不可能手动比对出来,算法秒级搞定。

- 「整理笔记」可以是工程,不必是玄学。测量、诊断、修复、再测量 — 和其他系统的维护没有区别。